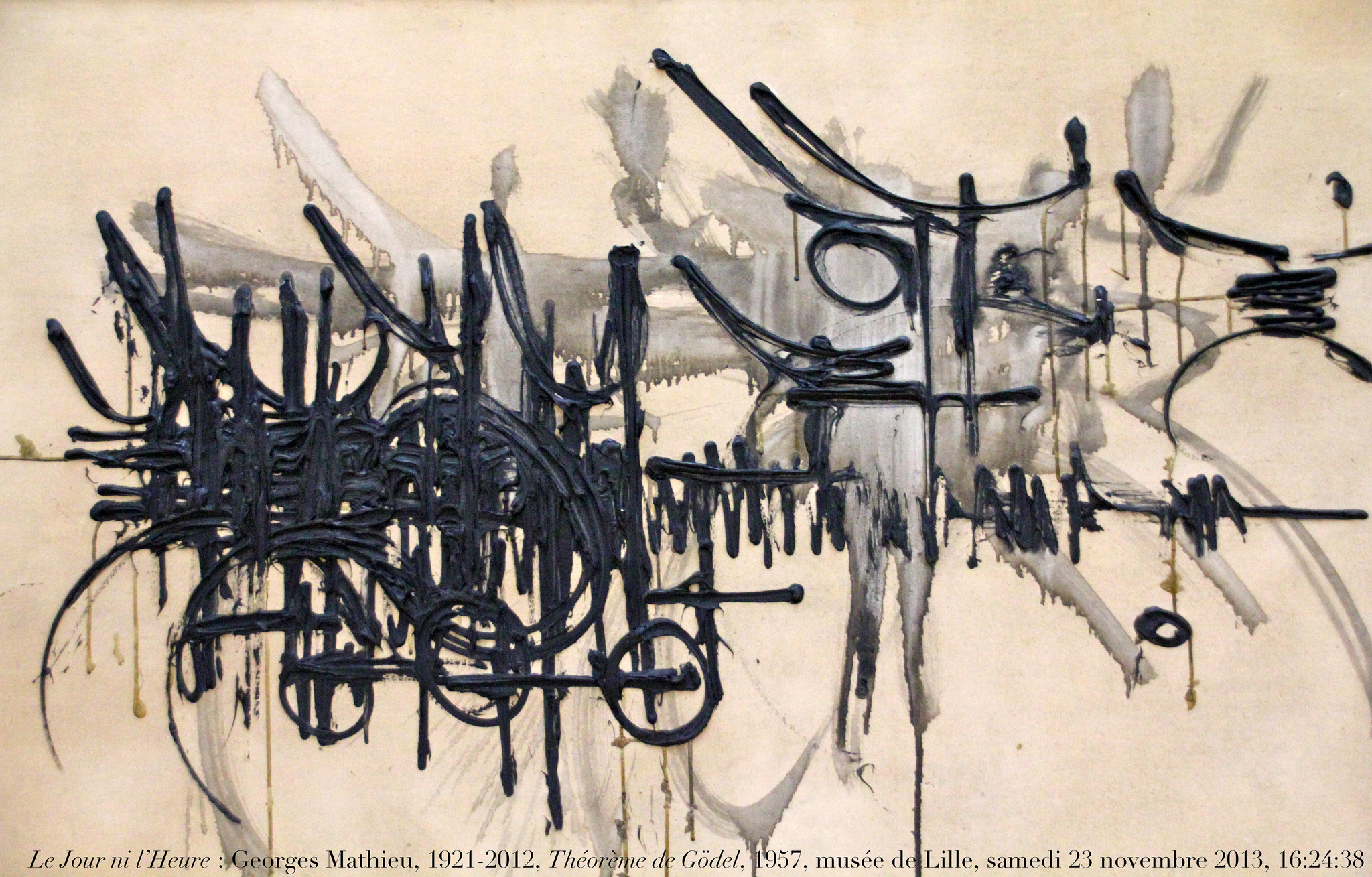

Gödel’s incompleteness theorem is used by both advocates and adversaries of strong AI to show that computers can(not) perform the same feats as humans. This article extends the construction through which Gödel proved his theorem, in order to allow a broader interpretation, showing that neither side has exploited its arguments to the fullest extend, and that the evidence can never be conclusive.

Dr.ir. C.J.B. Jongeneel & prof.dr. H. Koppelaar, Delft University of Technology, Faculty of Technical Mathematics and Informatics, Section of Knowledge Based Systems

1 Introduction

When Kurt Gödel originally published his incompleteness theorem, in 1931, it came as a shock to the mathematical world, for it showed that in every consistent mathematical system there are statements the (un)truth of which can only be established by an appeal to mathematical intuition, not by rigorous proof within the system under consideration. Gödel also gave a formula by which such statements could be constructed for any consistent formal system. Later, Gödel’s theorem was to play an important role in the so-called AI-debate, which centered on the question: can computers perform the same feats as the human brain or, more popularly, can computers think? Curiously, this question has been answered both with ‘yes’ and ‘no’ on basis of Gödel’s theorem. The aim of this article is to show that neither claim holds under thorough mathematical investigation.

The following text first examines some details of the construction through which Gödel established his theorem. This construction will prove to be vital to the arguments developed in the pro and contra sides in the AI-debate, as is shown in the third and fourth sections. The fifth section offers a discussion of Gödel’s theorem in relation to the AI-debate. By extending the construction of Gödel’s theorem in a new way, this paper points out loopholes in the arguments of both sides, thus adding evidence to previous claims. [JON96, p. 166-167] The final section presents some conclusions.

2 Gödel’s theorem

Kurt Gödel’s On formally undecidable propositions of Principia mathematica and related systems (reprinted in [GOE86]) is one of the most important mathematical articles of all times. It states, briefly, that every formal system contains expressions the truth of which cannot be established using the means that are available in the system. In other words, Gödel proved that every formal system is incomplete (in fact, he proved it for a limited class of formal systems, but his results have later been generalized – see [GOL95] for an up-to-date account). The core of Gödel’s theorem is a mathematical rendering of the paradox of Epimenides the Cretan, who said: “All Cretans are liars”. If the statement is true, it claims to be false, and vice versa.

For his proof Gödel developed a method to enumerate all possible formulas within a certain system, now known as Gödel numbering. This method can be briefly described as follows. Every symbol in the alfabet of the system is given a number. For instance, if the Ascii set is taken, “0” has number 48, “9” has number 57 and “=“ has number 61. Now, it is possible to map all formulas (valid sequences of symbols) on the natural numbers by associating the formula with length k, n1, n2, …, nk to the number 2n1 ¨ 3n2 ¨ … ¨ pknk, pk being the k-th prime number. [GOE86, p157] In the example the expression “0=0” would thus become 248¨357¨548. There are other ways to map formulas on the natural numbers, but the particular mapping chosen is not essential to the proof.

Every sequence of symbols being mapped on a natural number, the question is if there are numbers of which it cannot be established whether they represent a valid formula or not. If so, the axiomatic system constructing all valid formulas is incomplete (for a complete system would be able to establish the truth or untruth of any formula). Gödel proves the incompleteness of axiomatic systems by giving one very cleverly constructed counter-example. Using a sequence of 45 definitions, all of them recursively based on previous definitions, he comes to a formula xBy, meaning “x is a proof of the formula y”. The crucial thing is that Gödel is now able to express notions about a system within that system.

Next, he constructs Bew(x) ≡ ($y)yBx, meaning “there is an y that is a proof of x” or “x is a provable formula”. Bew(x), incidentally, cannot be shown to be recursive. (If you get a $-sign in this: an inverted-E is meant)

Gödel then goes on by defining a class K of natural numbers as follows:

n ε K ≡ ¬(Bew[R(n);n]), R(n) being a formula with one free variable, where all formulas have been arranged into some sequence and R(x) denotes the x-th one. [R(n);n] thus denotes the n-th formula where the free variable has been substituted with the natural number n. Since K is defined in terms of elements that have been defined previously, there must be some formula that decides whether a given natural number belongs to K. All formulas being numbered the one giving K may be called R(q).

The question now is: can a human prove the correctness of [R(q);q]? If so, q would belong to K and it is true that ¬(Bew[R(q);q]), or “[R(q);q] is not a provable formula” – a clear contradiction. On the other hand, if a human could prove [R(q);q] to be incorrect, this would mean q did not belong to K and, yielding (Bew[R(q);q]), or “[R(q);q] is a provable formula” – again, a clear contradiction. Hence, using the methods that are available within the system it is impossible to decide whether [R(q);q] is true or false. However, the matter can be decided in metamathematical terms. For [R(q);q] states about itself “I am not provable” and this indeed has appeared to be the case. So, [R(q);q] is actually true.

Thus, mathematical intuition (in the sense of the Dutch mathematician L.E.J. Brouwer, who found his views strengthened by Gödel’s theorem as opposed to those of Russell and Hilbert, Brouwer’s main adversaries) can answer a question that cannot be corroborated by mathematical proof. This is where the relevance of Gödel’s theorem to artificial intelligence enters the stage. Because of the theorem the Turing Machine (TM), an abstract apparatus subject to a formal system and the theoretical model underlying the computer, cannot establish the (un)truth of [R(q);q], to be called ‘Gödelian formula’ from now on. A human, however, can establish the truth of [R(q);q], because he is aware of the meta-argument.

Before actually turning to this discussion in AI, two remarks need to be made. In the first place, Gödel himself showed that it is impossible to prove the consistency of a formal system within that system. Since the theorem only applies to consistent systems, one can never be sure whether it is actually valid in specific cases. Therefore, here it is only assumed that the systems under consideration are consistent or at least not provably inconsistent. This assumption is fair, because it impedes the human’s reasoning as much as the machine’s, so wherever it causes trouble, it causes the same amount of trouble to both parties.

Secondly, in 1936 Alan Turing proved that there exists no universal method to decide on the truth of any mathematical assertion (reprinted in [DAV65, p116-154]). This means that neither a machine nor a human can be certain on his ability to decide on the truth of a statement that corresponds to a randomly chosen number. The complications caused by Turing’s proof will not be considered further. The discussion will be confined to numbers that have been constructed specifically to correspond to Gödelian formulas, which guarantees that their truth can be proven in a finite amount of time using the meta-argument discussed above (assuming consistency).

3 Gödel’s theorem contra AI

One of the first, in 1950, to notice the implications of Gödel’s theorem for artificial intelligence was Alan Turing in his seminal article Computing machinery and intelligence, where he first posed the question “Can machines think?” (reprinted in [HOF82]). He acknowledged that through the application of the theorem a human could indeed triumph over any machine, but added that it would be impossible to triumph over all machines simultaneously. He did not exploit this argument at length, however.

In fact, the use of Gödel’s theorem as a proof contra strong artificial intelligence is the almost exclusive domain of John Lucas, ever since he published Minds, machines and Gödel in 1961. Lucas took Turing’s argument one step further. Given a certain Turing Machine, Lucas acknowledged, it is easy for a human to construct a Gödelian formula corresponding to [R(q);q]. The TM, being bound to its formalism, is unable to establish the (un)truth of this formula, whereas the human, aware of the meta-argument, knows the formula is true.

However, since the Gödelian formula is derived from a standard procedure, it is possible to program a TM to produce it for a certain other machine. After all, it would be unfair to deny the TM the use of the meta-argument. The new, combined TM, though, has another Gödelian formula attached to it, which a human can produce to show the machine something it cannot decide upon. So, any particular machine, Lucas argues, can be beaten by a human. [LUC61, p118]

Note that Lucas is explicitly confining himself to the case where the human produces a Gödelian formula and the computer is asked to decide on its truth. Of course, one could turn around the roles and let the computer produce a Gödelian number for the human to decide upon. Then the human is not sure to find the answer, because he has no effective procedure to do so. However, the possibility cannot be ruled out either.

4 Gödel’s theorem pro AI

Among the earliest reactions on Lucas’ assertions were those of C.H. Whitley and I.J. Good. The latter mainly attacked Lucas’ bias in fixing a point in time for the test. Lucas’ argument is based on the fact that if the machine is fixed and the human given some extra time for calculations, the human may prove that he is not equivalent to the machine. However, if the decision on equivalence is made just after the machine has been adapted and the human has had no time yet to calculate a new Gödelian formula to prove his point, then the human has no argument. Thus, Good pones, Lucas’ argument is biased towards the human, while actually there is a computational race between human and machine that has no winner. [GOO67]

Since the focus is on mathematical soundness here, this paper is confined to the work of Judson Webb, who has examined Gödel’s theorem in relation to AI in detail. [WEB80] Webb, too, follows the same line of thought, concentrating on the fact that Gödel gives a mechanism for constructing the Gödelian formula.

Using some clever definitions that need not to be elaborated upon here, Webb constructs a Turing Machine Mt (t being the number of the TM) that does exactly what Lucas imagined: if it is given a number q, it calculates the Gödelian formula for Mq according to Gödel’s original recipe. Webb then goes on to show that his Mt can also handle t itself. [WEB80, p230-231] Therefore, Mt closes the computational race. It is possible to have a machine that, without the need of alterations (as in Good’s scenario) can decide on the same formulas as humans can.

As Webb argues, this is also defendable from the metamathematical point of view: if a human wants to be capable of startling any machine, he must have some effective procedure. But an effective procedure can be modelled by a machine. In Webb’s words: ‘Anti-mechanist arguments must either be ineffective, or else unable to show that their executor is not a machine.’ [WEB80, p. 232]

5 Gödel’s proof extended

Both Lucas and Webb claim that their results are derived from Gödel’s theorem. This, however, is slightly beside the truth. Rather than from Gödel’s theorem proper, Lucas and Webb derive their arguments from the construction by which Gödel proved his theorem. Hence, those arguments are vulnerable to alterations in the construction, as will be shown next.

In order to prove that there exist no complete, consistent mathematical systems, Gödel only needed to give a single counterexample for every thinkable system. This he did by diagonalizing the paradox of Epimenides, delivering [R(q);q]. But, as Gödel himself pointed out in the fourteenth footnote to his original article, any other epistemological antinomy will do as well. For the mathematical validity of his theorem this observation, of course, is not important (hence its exile to a footnote). However, in the context of artificial intelligence it is crucial.

The whole discussion as dealt with in the previous two sections centers on the observation that a TM may follow the recipe Gödel gives for constructing a counterexample showing the incompleteness of a formal system. However, since the TM cannot apply the meta-argument itself (because it is outside the formal system) it depends on additional information given to it by a human who applied the meta-argument. This in itself is not a problem, but it does mean that for every Gödelian formula the TM needs additional information. For every different Gödelian formula, the TM needs to be told that it actually is one.

Such different Gödelian formulas are fairly easy to produce. Let us, still following Gödel’s original notation, define a class L of natural numbers as follows: n ε L ≡ ¬(Bew[R(n);n]) v n=a, where a is a suitably chosen constant. That is, a is chosen such that Bew(R(a);a), ensuring that a is not in K. Effectively, L equals the original class K, augmented with a. Since L is defined in terms available in the system, just like in the case of K, there must be a formula that decides whether a given natural number belongs to L. This formula may be called R(s).

Now, since a is chosen such that K ≠ L, it follows that R(s) ≠ R(q). The proposition

[R(s);s] then leads to a paradox in exactly the same way as [R(q);q] does. This gives us a second Gödelian formula within Gödel’s original system. Furthermore, it is easily seen that there are infinitely many possibilities for a. To name just a few, a could be the sequence number in R of formulas like m=m, m+1=m+1 and m+m=2m. As a consequence it is possible to construct infinitely many different Gödelian formulas.

One may further extend Gödel’s construction by defining a class M of natural numbers: n ε M ≡ ¬(Bew[R(n);n]) v n=a1 v n=a2, where a1 and a2 are suitably chosen constants in the same sense as above. This once more produces an infinite range of Gödelian formulas.

Obviously one can further extend the construction by adding more disjunctions: n ε N ≡ ¬(Bew[R(n);n]) v n=a1 v n=a2 … v n=ak, where k is a natural number. Thus, in Webb’s metamathematical terms, one can effectively generate effective procedures for the construction of Gödelian formulas.

Now, it must be said that all the extensions given above are in principle mechanically reproducable. One may program a Turing Machine in such a way that it searches through all possible Gödelian formulas until it finds the one that was presented to it as a challenge by a human (note that this requires some clever diagonalization, for a TM searching through all possible classes L first would not terminate if the human constructed his Gödelian formula from some class of type M).

Hence, sheer computing power would still make the computer keep pace with the human. Actually, it would be quite a burden for a human to crack a Gödelian formula constructed by a computer according to one of the above recipes simply because he does not know which of them to apply (systematically checking all possibilities being a typical computer approach).

However, one might easily imagine more, even infinitely many recipes for Gödelian formulas. Let us, therefore, envisage a TM which has been augmented with all known recipes to generate Gödelian formulas. Using some pattern recognition features it is endowed with, this TM then states its suspicion that a certain formula (or range of formulas or recipe to generate this range) it can produce is a Gödelian formula. In order to prove this, it needs the meta-argument, but it only has the recipes. The TM may turn to a human for help, but this human can only conclude that the formula has not been constructed according to the recipes he is aware of. Hence the suspicion remains a suspicion; computer and human are equally ignorant.

This situation changes only if the human acknowledges the computer generated formula (recipe) as indeed a Gödelian formula (recipe for Gödelian formulas) by applying the meta-argument. On the other hand, if the human finds a new recipe for Gödelian formulas without the help of the TM, then the latter can only take it for granted if the human informs it.

According to this scheme of reasoning the human’s monopoly on the meta-argument seems to give him a perpetual lead, though the computer may always catch up. However, it is impossible to know for sure whether human imagination will always be able to disclose new Gödelian formulas. If (but only if) human imagination is unbound, there are always entirely new Gödelian formulas, untouched upon by man and machine, within the grasping of the human mind only. This, quite rightly, is an ineffective procedure. It gives no guarantees at all. Hence, Gödel’s theorem cannot be used conclusively to argue the superiority of the human mind over the machine, nor the equal capacities of both.

6 Conclusion

In the above it was shown that there are infinitely many proof constructions to Gödel’s theorem – in contrast to the single one that was used in discussions on artificial intelligence so far. Though all constructions that have been actually disclosed can be imitated by a computer, it is evident that there are constructions that have not been disclosed yet. Our analysis has shown that there might exist constructions that might only be discovered by a human. This is a small and definitely unprovable ‘maybe’ that depends on the limits of human imagination.

Hence, people arguing for the mathematical equivalence of humans and machines must ultimately rely on their belief in a limited mind, which implies that their conclusion is contained in their assumption. On the other hand, people advocating the superiority of humans must assume this superiority in their mathematical arguments, ultimately only deriving the conclusion that was already present in their system of reasoning from the very start.

So, it is not possible to produce (meta)mathematically sound arguments concerning the relation between the human mind and the Turing Machine without making an assumption on the human mind that is at the same time the conclusion of the argument. Therefore, the matter is undecidable.

References

[DAV65] Davis, M.(editor), The undecidable, basic papers on undecidable propositions,

unsolvable problems and computable functions. Hewlett (Raven), 1965.

[GEO62] George, F.H. ‘Minds, machines and Gödel: another reply to mr Lucas’. In: Philosophy,

vol 37 (1962), p. 62-63.

[GOE86] Gödel, K.F. Collected works, volume 1: publications 1929-1936. Oxford (University Press), 1986.

[GOL95] Goldstern, M., and H. Judah, The incompleteness phenomenon, a new course in mathematical logic. Wellesley (AK Peters), 1995.

[GOO67] Good, I.J., ‘Human and machine logic’. In: The British journal for the philosophy of science, vol 18 (1967-68), p. 144-147.

[GOO68] Good, I.J., ‘Gödel’s theorem is a red herring’. In: The British journal for the philosophy of science, vol 19 (1968-69), p. 357-358.

[HOF82] Hofstadter, D.R. and D.C. Dennett (editors), The mind’s I, fantasies and reflections on self and soul. London (Penguin), 1982. First edition: 1981.

[HOF85] Hofstadter, D.R., Gödel, Escher, Bach: een eeuwige gouden band. Amsterdam (Contact), 1985. First edition: 1979 (Gödel, Escher, Bach: an eternal golden braid).

[JON96] Jongeneel, C.J.B., The informatical world view, an inquiry into the methodology of computer science. PhD-thesis, Delft, 1996.

[LUC61] Lucas, J.R., ‘Minds, machines and Gödel’. In: Philosophy, vol 36 (1961), p112-127.

[LUC68] Lucas, J.R., ‘Human and machine logic: a rejoinder’. In: The British journal for the philosophy of science, vol 19 (1968-69), p. 155-157.

[SHA94] Shankar, N., Metamathematics, machines, and Gödel’s proof. Cambridge (University Press), 1994.

[WAN96] Wang, H., A logical journey, from Gödel to philosophy. Cambridge Mass. (MIT Press), 1996

[WEB80] Webb, J.C., Mechanism, mentalism and metamathematics. Dordrecht (Reidel), 1980.

[WHI62] Whiteley, C.H., ‘Minds, machines and Gödel: a reply to mr Lucas’. In: Philosophy, vol 37 (1962), p. 61-62.